Tempe police release video of self-driving Uber crash; focus switches back to XC90, backup driver


Tempe, Ariz., authorities have released some footage from the self-driving vehicle that killed a pedestrian Sunday, and the video reopens the possibility that the autonomous Uber SUV, its backup driver, or some combination of the two might be at fault.

Yesterday, it appeared that Uber and its backup driver might be exonerated; Police Chief Sylvia Moir told the San Francisco Chronicle it appeared that victim Elaine Herzberg, 49, stepped out in front of the vehicle too quickly for the AI or human driver to detect.

“It’s very clear it would have been difficult to avoid this collision in any kind of mode (autonomous or with a human) based on how she came from the shadows right into the roadway,” Moir told the Chronicle.

Automotive News has since reported comments from a Tempe police spokesman describing Moir’s comments as mischaracterized.

Full statement on why video contradicts police chief Moir’s comments to SF Chonicle: pic.twitter.com/62IjwHyPLj

— Katie Burke (@KatieGBurke) March 22, 2018

“While we recognize two media outlets have attributed exclusive interviews with the chief, we respectfully disagree with how their claim of interviews have been characterized,” spokesman Sgt. Ronald Elcock said in a statement.

The video shows the backup driver looking down before being startled by the impact, and Herzberg clearly walking a bicycle perpendicular to the vehicle, neither of which speaks well of the Uber system’s performance.

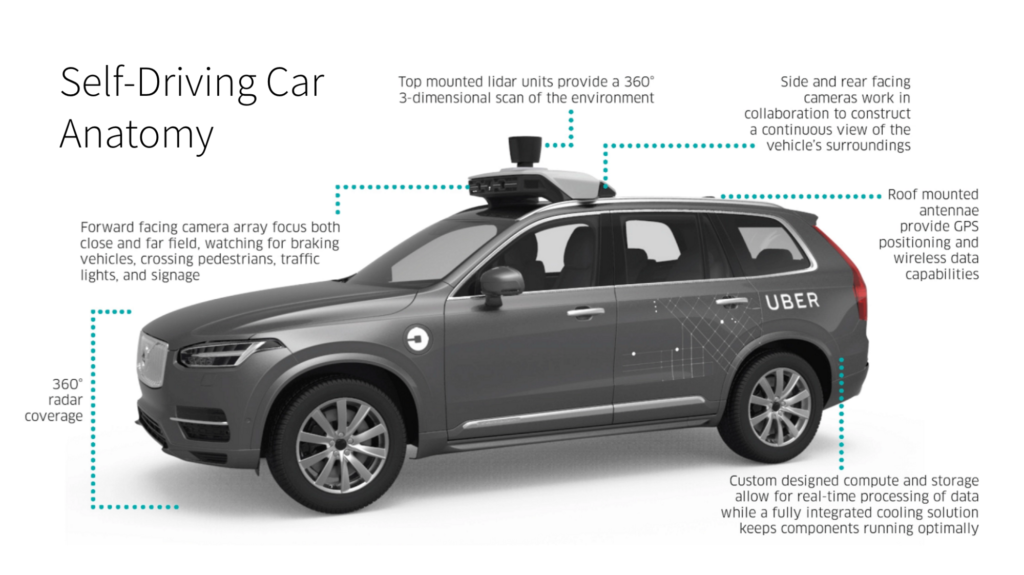

While a camera might have been tripped up by the nighttime scene, the system also has lidar and radar.

Granted, while lidar can ostensibly “see in the dark,” the technology has difficulty “seeing” items with low reflectivity, according to Axalta and Luminar experts at the 2018 Megatrends Detroit. Herzberg appears to have been wearing a dark shirt or jacket and blue jeans. However, radar is under no such restriction (it uses radio waves, not lasers; the physics are different). Its constraint, we’ve heard, is that while it can detect the existence of an object, it has difficulty identifying what the object is. (You can see why pairing cameras, lidar and radar is a useful way in theory to cover all of one’s bets.)

Distance can play a role depending on the quality of the vehicle’s radar and lidar — detecting something and deciding what to do about it is constrained at some point by the speed of light and the time it takes the autonomous “brain” to calculate what to do. However, it’s hard to see how this would have been a factor in this incident.

Continental, for example, sells long-range radar for normal passenger cars that can see up to 250 meters depending on field of view, and a popular lidar unit, the Velodyne “Puck,” can “see” 100 meters. The Chronicle reported the XC90 was traveling 38 mph in a 35 mph zone, though Jalopnik reported that based on Google Maps, it might have been a 40 mph region of the road. A 38 mph vehicle covers 16.99 meters per second.
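To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It converts the reported 38 mph to meters per second and estimates how many seconds of travel each quoted sensor range represents at that speed. The function names are ours, and the calculation assumes constant speed and that the sensors perform at their advertised ranges; it says nothing about what the Uber vehicle's actual sensors detected or how long its software needed to react.

```python
# Back-of-the-envelope only: constant speed, advertised sensor ranges,
# no allowance for processing time, braking distance, or road geometry.

MPH_TO_MPS = 1609.344 / 3600  # meters per mile divided by seconds per hour


def mph_to_mps(mph: float) -> float:
    """Convert miles per hour to meters per second."""
    return mph * MPH_TO_MPS


def seconds_to_cover(distance_m: float, speed_mph: float) -> float:
    """Seconds needed to travel distance_m at a constant speed_mph."""
    return distance_m / mph_to_mps(speed_mph)


speed_mph = 38  # speed reported by the Chronicle
ranges_m = {
    "long-range radar (up to)": 250,  # Continental figure cited above
    "lidar": 100,                     # Velodyne Puck figure cited above
}

print(f"{speed_mph} mph = {mph_to_mps(speed_mph):.2f} m/s")
for name, range_m in ranges_m.items():
    t = seconds_to_cover(range_m, speed_mph)
    print(f"{name}: {range_m} m is about {t:.1f} s of travel")
```

Under those assumptions, 100 meters works out to roughly six seconds of travel and 250 meters to roughly 15 seconds, which is why raw sensor range alone seems an unlikely explanation.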

Wired cited an autonomous vehicle expert who argued that the car should have been able to detect Herzberg.

“I think the sensors on the vehicles should have seen the pedestrian well in advance,” University of California, Berkeley research engineer Steven Shladover told the magazine. “If she had been moving erratically, it would have been difficult for the systems to predict where this person was going.”

The Associated Press quoted Navigant Research analyst Sam Abuelsamid, who agreed: “It absolutely should have been able to pick her up. From what I see in the video it sure looks like the car is at fault, not the pedestrian.”

“The video is disturbing and heartbreaking to watch, and our thoughts continue to be with Elaine’s loved ones,” an Uber spokesperson told Wired.

Wired also wondered if an autonomous vehicle’s backup driver could really be expected to maintain an acceptable level of focus for an entire shift, an interesting point. We’ve previously covered the issue with human-machine handoffs: humans require a few seconds to get their head in the game, so to speak.

Such questions surrounding the testing might dictate how quickly the technology comes to market and begins to affect collision volume.

BMI Research on Wednesday observed that the collision having occurred in “less-regulated” Arizona might prompt nationwide standards and greater regulatory scrutiny:

Legislation on autonomous light vehicles and their commercialisation in the US, has until now only been subject to a patchwork of state level regulations that differ across the country. AV developers have flocked to states like Arizona, which have arguably less stringent rules on the use of AVs and fewer requirements for making test results publicly available. However, with the accident having occurred in the less-regulated Arizona area this may now fuel the argument that a more strict and national level legislation is required in order to stop a legislative ‘race-to-the-bottom’ where states relax key legislation in order to attract AV investments. This is in line with our key view for 2018 that autonomous vehicle regulation would be sharply ramped up over the year.

It’ll also be interesting to see if it affects systems like the CT6’s “Super Cruise,” which is being sold to consumers today as hands-free autonomous driving. That system can be used only on specific “limited-access freeways that are separated from opposing traffic,” a network totaling more than 130,000 miles, according to Cadillac. Unlike the Uber self-driving XC90, cars on those roads shouldn’t encounter many issues with pedestrians, which removes a major autonomous vehicle challenge. (We’re talking on- and off-ramp freeways only here; any pedestrian on these roads is probably breaking the law.)

The system is interesting because it appears to be the first publicly available hands-free self-driving option (the cheapest version is a $5,000 upgrade to the $65,295 Premium Luxury trim). A CT6-certified shop (or even an uncertified repairer doing cosmetic work) could theoretically encounter a vehicle with it tomorrow: it has been available as an option on all Premium Luxury trims and all Platinum trims made after Sept. 6, 2017.

Unlike with Tesla’s Autopilot, Cadillac says you can take your hands off the wheel when Super Cruise is active and perform what the OEM calls “common tasks in the car, such as using the navigation system, adjusting the audio system or taking a phone call.”

However, it still requires the driver to monitor the road, and software will monitor you with an interior camera and alert you if it deems you’re paying too little attention. If a driver seems incapacitated, the car will stop itself and alert authorities with OnStar.

More information:

“United States – Implications Of Uber’s Self-Driving Fatality”

BMI Research, March 21, 2018

“UBER VIDEO SHOWS THE KIND OF CRASH SELF-DRIVING CARS ARE MADE TO AVOID”

Wired, March 21, 2018

Cadillac CT6 Super Cruise website

“Cadillac Super Cruise™ Sets the Standard for Hands-Free Highway Driving”

Cadillac, April 10, 2017

Images:

Tempe authorities have released some footage from the self-driving vehicle which killed a pedestrian Sunday, and the video reopens the possibility that some combination of the autonomous Uber SUV or the backup driver might be at fault. (Screenshot from Tempe police video from autonomous Uber Volvo XC90)

Technology found on a self-driving Uber Volvo XC90. (Provided by Uber)